Can AI Fatigue Monitoring Reduce Human Error in Night-Time Driving Conditions?

Driving at night is a different animal. Visibility drops, reaction times slow, and our internal clocks push us toward sleep. In environments like ports, mining yards, logistics hubs and long highway hauls, those risks become operational and financial hazards. This post walks through what AI-based driver fatigue monitoring systems do, how they perform in real settings, and how they pair with vehicle camera systems to make night work safer, all in plain, easy-to-read language.

1. Why night driving raises the stakes

Night driving compresses risk into a few simple problems: lower visibility, higher fatigue, and delayed responses. When ambient light drops, drivers rely more on limited cues — headlight beams, reflective paint, and whatever they can see on the dashboard. That reduces the margin for error in split-second situations.

Add fatigue. Studies and crash data show drowsy drivers are a real contributor to collisions: many thousands of police-reported crashes involve fatigue each year, and hundreds of fatalities are linked to drowsy driving annually. These are not distant, theoretical numbers — they represent people and costly downtime for fleets.

Finally, night operations are common in industries like ports and logistics. Heavy vehicles moving in low light, coupled with tight schedules, make even small lapses dangerous. That’s why many fleet operators are looking at technical solutions, not just policy changes.

2. What is an AI driver state monitoring system and how does it work?

At its core, an AI driver state monitoring system watches the driver. Cameras and sensors track facial features, eye closure, gaze direction, head nods, and sometimes steering or pedal patterns. Onboard software uses these inputs to estimate fatigue, distraction, or inattention in real time.

The AI models behind these systems are trained on thousands of hours of footage and a wide array of behaviors, so they can distinguish normal quick glances from dangerous microsleep episodes. When a risky pattern appears — long eye closures, repeated yawning, or fixed gaze away from the road — the system triggers alerts: audible alarms, seat vibrations, or messages to a fleet manager.
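To make "long eye closures" concrete, here is a minimal sketch of one widely used fatigue measure, PERCLOS (the share of time the eyes are at least 80% closed over a sliding window), combined with a check for single closures long enough to suggest a microsleep. It assumes an upstream face and eye tracker already produces one eye-openness value per frame between 0 (closed) and 1 (open). The class name, frame rate, window length and thresholds are hypothetical, illustrative choices, not values from any particular product.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class FatigueStatus:
    perclos: float            # fraction of frames in the window with eyes at least 80% closed
    longest_closure_s: float  # longest continuous closure inside the window, in seconds
    drowsy: bool


class PerclosMonitor:
    """Sliding-window PERCLOS plus a long-closure (possible microsleep) check.

    Assumes an upstream eye tracker supplies one openness value per frame,
    where 0.0 means fully closed and 1.0 means fully open.
    All default thresholds are illustrative assumptions.
    """

    def __init__(self, fps=30, window_s=60.0, closed_threshold=0.2,
                 perclos_limit=0.15, microsleep_s=0.5):
        self.fps = fps
        self.closed_threshold = closed_threshold  # openness <= 0.2 means >= 80% closed
        self.perclos_limit = perclos_limit
        self.microsleep_s = microsleep_s
        self.window = deque(maxlen=int(fps * window_s))

    def update(self, openness):
        self.window.append(openness <= self.closed_threshold)
        perclos = sum(self.window) / len(self.window)

        # Longest run of consecutive "closed" frames currently in the window.
        longest = run = 0
        for is_closed in self.window:
            run = run + 1 if is_closed else 0
            longest = max(longest, run)
        longest_s = longest / self.fps

        drowsy = perclos >= self.perclos_limit or longest_s >= self.microsleep_s
        return FatigueStatus(perclos, longest_s, drowsy)


# Example: 9 s of open eyes, then a 0.6 s closure, at an assumed 10 frames per second.
monitor = PerclosMonitor(fps=10, window_s=10.0)
for _ in range(90):
    status = monitor.update(0.9)
for _ in range(6):
    status = monitor.update(0.05)
print(status)
```

Here a normal blink of a few frames does not trip the alert, while the 0.6-second closure does, even though PERCLOS over the window is still low. Checking both signals is what lets a system separate quick glances and blinks from a sustained lapse.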

Crucially, these systems can be tuned for different environments. Mining trucks and port cranes use ruggedized sensors and infrared lighting to work through sunglasses or in very low light, while passenger-car systems lean more on visible-light cameras. Whichever setup fits the vehicle, the result is a continuous safety layer during night shifts.

3. The hard numbers: can monitoring actually reduce crashes?

Real-world results are promising. Some operator reports and vendor studies in heavy industries claim reductions in “fatigue events” of 70–90% after adding eye-tracking and driver monitoring tech — especially where alerts are paired with enforced rest breaks or supervisor intervention. Those figures come from deployments in high-risk sectors such as mining and long-haul trucking.

Other reviews and field studies report consistent improvements in detection performance, though results vary by technology type and operating conditions. Independent testing has highlighted that systems combining direct (camera-based) and indirect (vehicle-behavior) monitoring perform best, because each mode catches different kinds of disengagement.
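One way to picture combining the two modes is score-level fusion. The sketch below counts steering reversals (direction changes larger than a small angle gap, a commonly studied indirect cue) and blends the result with a camera-based drowsiness score. The steering score here is simply how far the reversal rate departs from the driver's own baseline, and the rule that falls back to steering alone when camera confidence is low (for example, glare or sunglasses) is a hypothetical design choice; all weights, gaps and baselines are assumptions.

```python
def steering_reversals(angles_deg, gap_deg=2.0):
    """Count steering reversals: direction changes larger than gap_deg.

    A simplified reversal count; small jitter below gap_deg is ignored.
    """
    reversals = 0
    direction = 0            # +1 = angle increasing, -1 = decreasing, 0 = not yet known
    extreme = angles_deg[0]  # most recent turning point (or the start value)
    for a in angles_deg[1:]:
        if direction == 0:
            if abs(a - extreme) >= gap_deg:
                direction = 1 if a > extreme else -1
                extreme = a
        elif direction == 1:
            if a > extreme:
                extreme = a
            elif extreme - a >= gap_deg:
                reversals += 1
                direction, extreme = -1, a
        else:
            if a < extreme:
                extreme = a
            elif a - extreme >= gap_deg:
                reversals += 1
                direction, extreme = 1, a
    return reversals


def fused_risk(camera_score, camera_confidence, reversals_per_min,
               baseline_rpm=10.0, w_camera=0.7, min_confidence=0.5):
    """Combine a camera drowsiness score (0-1) with a steering-behavior score (0-1).

    The steering score measures how far the reversal rate departs from the
    driver's baseline. If the camera cannot see the eyes reliably, fall back
    to the steering channel alone.
    """
    steering_score = min(1.0, abs(reversals_per_min - baseline_rpm) / baseline_rpm)
    if camera_confidence < min_confidence:
        return steering_score
    return w_camera * camera_score + (1 - w_camera) * steering_score


print(steering_reversals([0, 3, 0, 3, 0]))   # clear back-and-forth corrections
print(steering_reversals([0, 1, 0, 1, 0]))   # jitter below the 2-degree gap
print(fused_risk(0.9, 0.9, 10.0))            # camera confident, steering normal
print(fused_risk(0.0, 0.2, 20.0))            # camera blinded, steering far from baseline
```

The last call shows the point of mixing modes: when the eye tracker is effectively blind, the vehicle-behavior channel can still raise the risk score.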

Put practically: if a fleet sees a 30–50% reduction in incidents caused by delayed reaction or inattention at night, the ROI can appear quickly — fewer repairs, less downtime, fewer injury claims, and potentially lower insurance premiums.
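A back-of-the-envelope payback estimate turns the 30–50% scenario into numbers. Every input below (incident count, cost per incident, system price, running cost) is a hypothetical placeholder to be replaced with a fleet's own records; the sketch only shows the arithmetic.

```python
def payback_months(incidents_per_year, cost_per_incident, reduction,
                   upfront_cost, annual_running_cost):
    """Months until avoided incident costs repay the upfront investment.

    All inputs are fleet-specific assumptions; currency units just need to match.
    Returns infinity if running costs exceed the savings.
    """
    annual_savings = incidents_per_year * cost_per_incident * reduction
    net_annual = annual_savings - annual_running_cost
    if net_annual <= 0:
        return float("inf")
    return 12 * upfront_cost / net_annual


# Hypothetical fleet: 12 night incidents a year at 200,000 each (any currency),
# a 500,000 system package and 100,000 a year in running costs.
for reduction in (0.30, 0.50):
    months = payback_months(12, 200_000, reduction, 500_000, 100_000)
    print(f"{reduction:.0%} fewer incidents -> payback in {months:.1f} months")
```

Including the running cost matters: for a fleet with very few incidents, the net saving can be zero or negative, and the function reports an infinite payback rather than a misleading figure.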

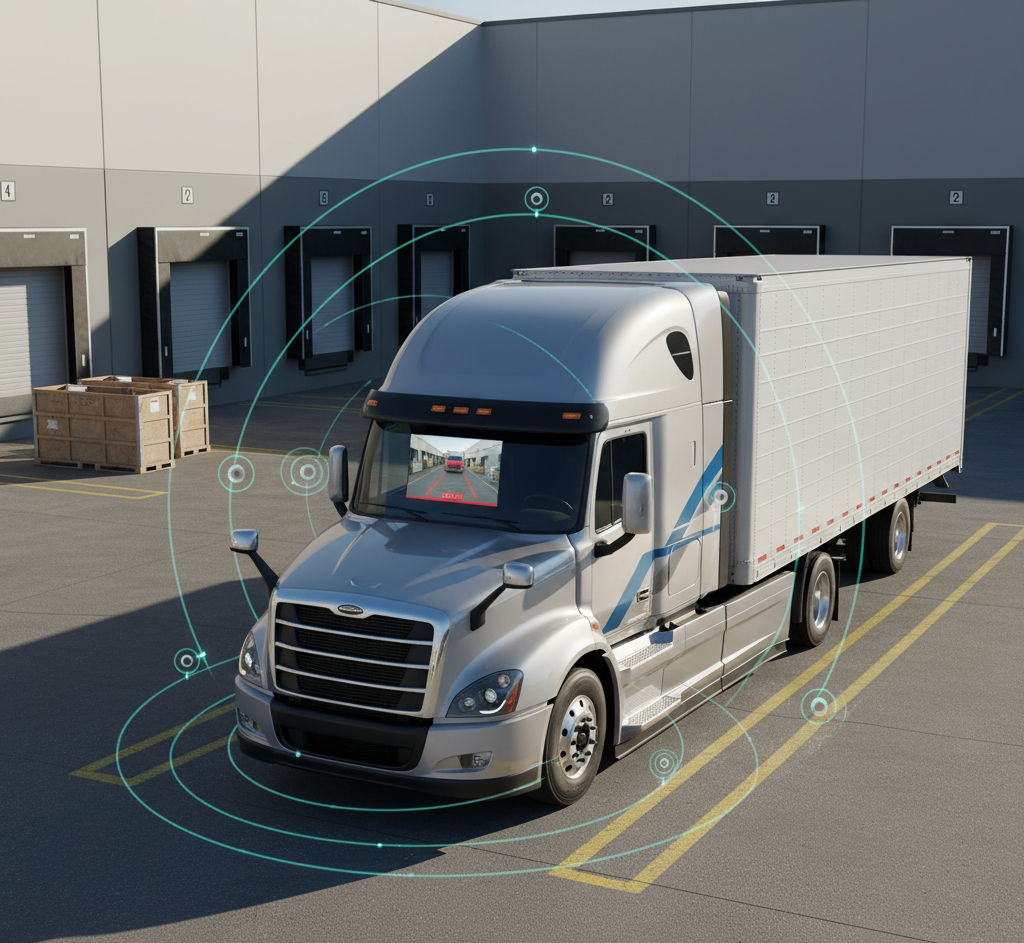

4. Cameras and visibility: 360 degree vehicle camera system and rear view camera systems

AI monitoring is powerful, but it’s even more effective when paired with robust external visibility tools. A 360 degree vehicle camera system offers a bird’s-eye view around the truck or forklift, removing blind spots and recording events from all angles. This makes it easier for a fatigued or distracted driver to spot a pedestrian or obstacle they might otherwise miss.

Rear view camera systems play a specific — but critical — role in preventing back-over incidents. Back-over crashes still cause hundreds of fatalities and thousands of injuries each year; adding rear cameras and sensors has been shown to reduce these kinds of low-speed, high-consequence crashes. In many countries, backup cameras are now mandated because the safety benefit is clear.

Combine a driver state monitoring system with 360° and rear-view coverage and you get a layered approach: the AI watches the driver, external cameras watch the environment, and together they create both prevention (alerts) and post-event evidence (video) that can improve training and liability outcomes.
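One common way to produce that post-event evidence is a rolling buffer, so a saved clip contains the seconds before an alert as well as after it. Here is a minimal sketch of the pattern; frames are treated as opaque objects, and the `EventClipRecorder` name and buffer lengths are hypothetical.

```python
from collections import deque


class EventClipRecorder:
    """Rolling pre-event buffer that turns an alert into a before-and-after clip.

    Frames are treated as opaque objects. Buffer lengths are illustrative.
    """

    def __init__(self, fps=15, pre_s=10, post_s=5):
        self.pre = deque(maxlen=fps * pre_s)  # always holds the most recent frames
        self.post_frames = max(1, fps * post_s)
        self.pending = None                   # clip currently being completed
        self.clips = []                       # finished clips as (label, frames)

    def add_frame(self, frame):
        if self.pending is not None:
            self.pending["frames"].append(frame)
            self.pending["remaining"] -= 1
            if self.pending["remaining"] == 0:
                self.clips.append((self.pending["label"], self.pending["frames"]))
                self.pending = None
        self.pre.append(frame)

    def trigger(self, label):
        # Ignore new triggers while a clip is still collecting post-event frames.
        if self.pending is None:
            self.pending = {"label": label, "frames": list(self.pre),
                            "remaining": self.post_frames}


# Example at 1 frame per second: 3 s before the alert, 2 s after it.
rec = EventClipRecorder(fps=1, pre_s=3, post_s=2)
for t in range(5):
    rec.add_frame(t)
rec.trigger("drowsiness alert")
for t in range(5, 8):
    rec.add_frame(t)
print(rec.clips)
```

Because the buffer is bounded, memory use stays fixed no matter how long the shift runs; only triggered clips are kept for review or upload.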

5. Real-world integration: how fleets make the tech work at night

Implementation matters. A few technical points fleet managers consider: camera placement (to minimize glare), sensor calibration for low temperatures and dust, and data bandwidth for transmitting alerts or footage. Night lighting and infrared capability are essential so the systems remain reliable after dark.
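A quick estimate shows why deployments often send small alert messages in real time and upload video selectively. The bitrate, camera count, shift pattern, event rate and message size below are assumed figures for illustration only.

```python
def event_upload_gb(events_per_shift, clip_s, video_kbps, cameras,
                    alert_bytes, shifts_per_month):
    """Monthly data if only alert messages and short event clips are uploaded."""
    bytes_per_s = video_kbps * 1000 / 8          # kilobits per second -> bytes per second
    clip_bytes = bytes_per_s * clip_s * cameras
    return events_per_shift * (clip_bytes + alert_bytes) * shifts_per_month / 1e9


def continuous_upload_gb(video_kbps, cameras, hours_per_shift, shifts_per_month):
    """Monthly data if every camera streams for the whole shift."""
    bytes_per_s = video_kbps * 1000 / 8
    return bytes_per_s * 3600 * hours_per_shift * cameras * shifts_per_month / 1e9


# Assumed figures: 4 cameras at 2 Mbit/s, 10-hour night shifts, 26 shifts a month,
# 5 events per shift with 15-second clips and 2 KB alert messages.
print(round(event_upload_gb(5, 15, 2000, 4, 2000, 26), 2))   # per vehicle, in GB
print(round(continuous_upload_gb(2000, 4, 10, 26), 1))       # per vehicle, in GB
```

Under these assumptions, event-based upload needs roughly 2 GB per vehicle per month against several hundred GB for continuous streaming, which is why continuous footage usually stays on the vehicle's local storage.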

Training is equally important. Drivers need to trust the system; if alerts are overly sensitive they get ignored. The best deployments tie alerts to human processes: an initial alarm, then a supervisor check, then mandatory rest if needed. When AI is coupled with clear procedures, compliance rises and fatigue-related incidents drop.
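The escalation chain described above (alarm, then supervisor check, then mandatory rest) maps naturally onto a small state machine. This sketch assumes a simple hypothetical policy in which repeated drowsiness alerts within a rolling 30-minute window step the response up a level; the level names, window and counts are placeholders that each operator would set from its own fatigue-management procedures.

```python
from enum import IntEnum


class Response(IntEnum):
    IN_CAB_ALARM = 1
    SUPERVISOR_CHECK = 2
    MANDATORY_REST = 3


class EscalationPolicy:
    """Escalate the response as drowsiness alerts repeat within a rolling window."""

    def __init__(self, window_s=1800, supervisor_after=2, rest_after=3):
        self.window_s = window_s
        self.supervisor_after = supervisor_after
        self.rest_after = rest_after
        self.alerts = []  # timestamps (seconds) of recent drowsiness alerts

    def on_alert(self, t):
        # Drop alerts that have aged out of the window, then record this one.
        self.alerts = [a for a in self.alerts if t - a < self.window_s]
        self.alerts.append(t)
        n = len(self.alerts)
        if n >= self.rest_after:
            return Response.MANDATORY_REST
        if n >= self.supervisor_after:
            return Response.SUPERVISOR_CHECK
        return Response.IN_CAB_ALARM


policy = EscalationPolicy()
for t in (0, 600, 1200):  # three alerts, ten minutes apart
    print(t, policy.on_alert(t).name)
```

Because isolated alerts only sound the in-cab alarm, a single false positive does not pull in a supervisor, which helps keep drivers from learning to ignore the system.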

Camera coverage belongs in the same planning. Imagine a fuel tanker reversing into a crowded depot. Without a 360-degree view, the driver relies on mirrors and a rear camera, which can't see workers standing close to the sides of the vehicle. One wrong move could lead to property damage or a human tragedy.

Finally, maintenance keeps the system effective. Dirty lenses, damaged cables, or outdated firmware blunt detection. Regular health checks, which many modern 360° camera systems provide automatically, keep the tech running and the data trustworthy.
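Automated health checks of this kind can be approximated with a few simple rules. The sketch below flags a camera whose feed has gone stale, whose image has become unusually soft (the variance of a Laplacian filter, used here as a rough proxy for a dirty or fogged lens), or whose firmware is older than a required version. The `CameraReport` fields, thresholds and version format are hypothetical; real systems expose their own diagnostics.

```python
from dataclasses import dataclass


@dataclass
class CameraReport:
    camera_id: str
    last_frame_age_s: float  # seconds since the last frame arrived
    gray: list               # small grayscale frame as rows of 0-255 ints
    firmware: tuple          # version as a tuple, e.g. (2, 4, 1)


def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian; low values suggest a blurry image."""
    values = []
    for y in range(1, len(gray) - 1):
        for x in range(1, len(gray[0]) - 1):
            lap = (gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
                   - 4 * gray[y][x])
            values.append(lap)
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def health_issues(report, min_sharpness=100.0, max_age_s=2.0, min_firmware=(2, 0, 0)):
    issues = []
    if report.last_frame_age_s > max_age_s:
        issues.append("stale feed")
    if laplacian_variance(report.gray) < min_sharpness:
        issues.append("low sharpness (check lens)")
    if report.firmware < min_firmware:  # tuples compare element by element
        issues.append("firmware out of date")
    return issues


checker = [[255 if (x + y) % 2 else 0 for x in range(6)] for y in range(6)]  # sharp detail
flat = [[128] * 6 for _ in range(6)]                                          # no detail at all
print(health_issues(CameraReport("rear", 0.1, checker, (2, 4, 1))))
print(health_issues(CameraReport("left", 5.0, flat, (1, 9, 0))))
```

A sharpness threshold like this has to be calibrated per camera and scene, since a dark, featureless yard at night can also score low without any lens problem.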

6. Challenges, limits, and ethical considerations

No technology is perfect. AI systems can struggle with unusual faces, extreme lighting, or drivers wearing very large sunglasses. False positives (alerts when the driver is fine) and false negatives both erode confidence. That’s why multi-sensor solutions and continuous model updates matter.
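Both error types can be tracked with standard detection metrics once alerts are compared against labelled ground truth, such as reviewed video. This sketch computes precision (how many alerts were real), recall (how many real fatigue episodes were caught) and false alerts per hour; the counts in the example are made up for illustration.

```python
def alert_metrics(true_pos, false_pos, false_neg, hours_monitored):
    """Summarize alert quality against labelled ground truth."""
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return {
        "precision": precision,                             # share of alerts that were real
        "recall": recall,                                   # share of real episodes caught
        "false_alerts_per_hour": false_pos / hours_monitored,
    }


# Hypothetical review of 200 monitored night-shift hours.
print(alert_metrics(true_pos=40, false_pos=10, false_neg=10, hours_monitored=200))
```

Tracking false alerts per hour alongside recall makes the trade-off visible: tightening thresholds to catch more microsleeps usually raises the false-alert rate that drivers experience.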

Privacy and data governance are also front of mind. Video and behavioral data are sensitive; clear policies on storage duration, access controls, and use (safety and training only, not punitive surveillance) help balance safety with rights. Operators should be transparent with staff about what is collected and why.
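Policies like these are easier to audit when they are written down as explicit rules the system enforces. This sketch encodes a hypothetical retention period per data type and a role-based check that limits access to safety and training purposes; the data types, roles and durations are placeholders, not recommendations or legal requirements.

```python
from datetime import datetime, timedelta

# Hypothetical retention periods per data type.
RETENTION = {
    "alert_event": timedelta(days=365),
    "event_clip": timedelta(days=90),
    "continuous_video": timedelta(days=7),
}

# Hypothetical roles and the purposes each may access data for.
# No role is granted a disciplinary or general-surveillance purpose.
ALLOWED_PURPOSES = {
    "safety_manager": {"incident_review", "training"},
    "trainer": {"training"},
}


def is_expired(data_type, recorded_at, now):
    """True if a record has outlived its retention period and should be purged."""
    return now - recorded_at > RETENTION[data_type]


def can_access(role, purpose):
    """True only if the role is allowed to use data for the stated purpose."""
    return purpose in ALLOWED_PURPOSES.get(role, set())


now = datetime(2024, 6, 1)
print(is_expired("event_clip", datetime(2024, 1, 1), now))
print(can_access("trainer", "incident_review"))
```

Unknown roles fall through to an empty set, so access is denied by default unless a purpose has been explicitly granted.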

Cost is another barrier, especially for small fleets. But consider this: the cost of one preventable night collision — vehicle repair, lost shifts, and possible injury claims — can eclipse the upfront price of a modern monitoring + camera package. Smart financing and staged rollouts help make adoption realistic.

Driving Safety Forward with MyPort Services India Pvt Ltd

MyPort Services India Pvt Ltd supplies heavy-duty automotive safety and advanced warning systems to mining, ports, logistics, and warehousing — backed by 15 years of proven installations. Combining a robust driver state monitoring system with a 360 degree vehicle camera system and reliable rear view camera systems creates a layered safety net that reduces night-time human error, protects people, and lowers operational costs. If you operate heavy vehicles at night and want to cut fatigue-related risk, talk to MyPort Services India Pvt Ltd for a site assessment and tailored solution.